1 引言

分裂可行性问题 (Split Feasibility Problem, SFP), 最早于 1994 年由 Censor 和 Elfving[1]提出并命名, 其数学模型是

由文献 [3,命题 3.1] 知, 在解集

其中,

Byrne[6]最早提出投影型数值解法 (CQ 算法), 其迭代方案是

其中,

其中,

三是基于 Armijo 线搜索产生自适应的步长

其中,

根据文献 [3], 在

的解. 本文致力于从凸约束非线性单调方程组 (1.3) 的视角寻找求解和设计 SFP 的新型算法. 这一思想在目前的文献中未见报道.

1964 年, Polyak[23]首次提出了惯性外推技术. 大量的数值实验表明, 惯性外推技术可加快收敛速度. 例如, 针对 SFP, Sahu[24]和 Suantai 等[25]在算法中加入惯性外推技术, 有效的提高了算法的性能, 并具有很好的收敛性. 2022 年, Jian 等[26]提出了非线性伪单调方程组的一簇惯性无导数投影法. 最近, 基于凸组合技术, Jiang 等[27]提出了求解无约束优化的一簇混合共轭梯度法. 基于文献 [26] 的惯性加速技术和文献 [27] 的凸组合及混合技术, 本文提出一个新型惯性共轭梯度投影算法 (ICGPM). 此算法不用计算矩阵

本文余下部分结构如下: 第 2 节给出算法的具体步骤; 第 3 节证明其全局收敛性和

2 惯性共轭梯度投影法

记

算法 1 ICGPM.

步骤 0 任取初始点

步骤 1 若

并计算惯性步

若

步骤 2 计算搜索方向

其中

凸组合参数

步骤 3 采用 Armijo 型线搜索求步长, 即

令

步骤 4 计算下一个迭代点

其中

置

注 2.1 (1) 根据算法 1 易知

(2) 在 (2.5) 式中, 参数

注 2.3 由 (2.1) 式知

注 2.4 Armijo 型线搜索 (2.4) 式取自文献 [14], 其目的是构造分离超平面. 事实上, 由 (2.4) 式知

进而, 由引理 3.2 得

根据

而

由 (2.8) 式和 (2.9) 式知, 超平面

严格分离

3 全局收敛性与线性收敛率

本节分析算法 1 的全局收敛性和线性收敛率. 为此, 给出以下命题及引理.

命题 3.1 设函数

(i) [5]

(ii) [5] 映射

(iii) [4,引理 8.1] 映射

命题 3.2 [3,命题 3.1] 设 SFP 的解集

(i)

(ii)

(iii)

命题 3.3 对任意

命题 3.4[28] 设

引理 3.1 设

进而, 对任意

及信赖域性质

证 由 (2.3) 式知

其中,

故 (3.3) 式得证.

下面证明 (3.4) 式. 当

当

其中, 上面第一个不等式利用了柯西-施瓦茨不等式及

接下来, 证明 (3.5) 式. 当

当

故 (3.5) 式得证. 证毕.

引理 3.2 算法 1 步骤 3 的 Armijo 型线搜索可在有限次计算后完成, 从而算法 1 是适定的.

证 反证法. 假设存在某个非负整数

令

引理 3.3 设 SFP 的解集

证 由

此结合 (3.1) 式知

根据

于是, 由 (2.5) 式和命题 3.4 知

其中, 上面第三个不等式利用了 (2.6) 式, (3.7) 式及 (3.8) 式, 最后一个不等式由

其中, 上面第三个等式由命题 3.3 得到, 最后一个不等式利用了

若

(i)

(ii)

定理 3.1 设

(i)

(ii)

证 记

于是, 根据 (3.6) 式得

由

推论 3.1 若定理 3.1 的条件成立, 则序列

证 由 (2.7) 式得

此结合 (2.2) 式和

另外, 根据定理 3.1(i) 知序列

下面给出本文的全局收敛性定理

定理 3.2 由算法 1 产生的迭代序列

证 首先, 证

此结合 (3.11) 式, 有

因此, 由

其次, 证明

由 (3.4) 式知

进而, 由

在 (3.4) 式中令

由算法 1步骤 3 的 Armijo 型线搜索准则知, 对充分大的

同理, 对上述不等式取极限, 并利用

成立.

最后, 验证

另一方面, 由(3.12) 式易得

此进一步表明整个序列

基于定理 3.2, 在如下假设条件下, 我们进一步讨论算法 1 的线性收敛速度.

假设 3.1 对序列

其中

注 3.1 若

则假设 3.1 成立. 事实上, 由柯西-施瓦茨不等式知

于是有

令

下面引理给出步长序列

引理 3.5 对于算法 1 产生的步长序列

证 令

令

故

借助于文献 [31] 关于线性收敛率的分析技巧, 下面建立算法 1 的线性收敛率.

定理 3.3 设假设 3.1 成立, 序列

(1) 若

(2) 若

(3) 若

证 结论 (1) 和结论 (2) 显然成立, 现证明结论 (3).

设

另一方面, 根据

根据

结合 (3.23) 式, (3.24) 式和 (3.25) 式, 当

其中

由 (2.1) 式知

进而,

其中,

此表明若

4 数值实验

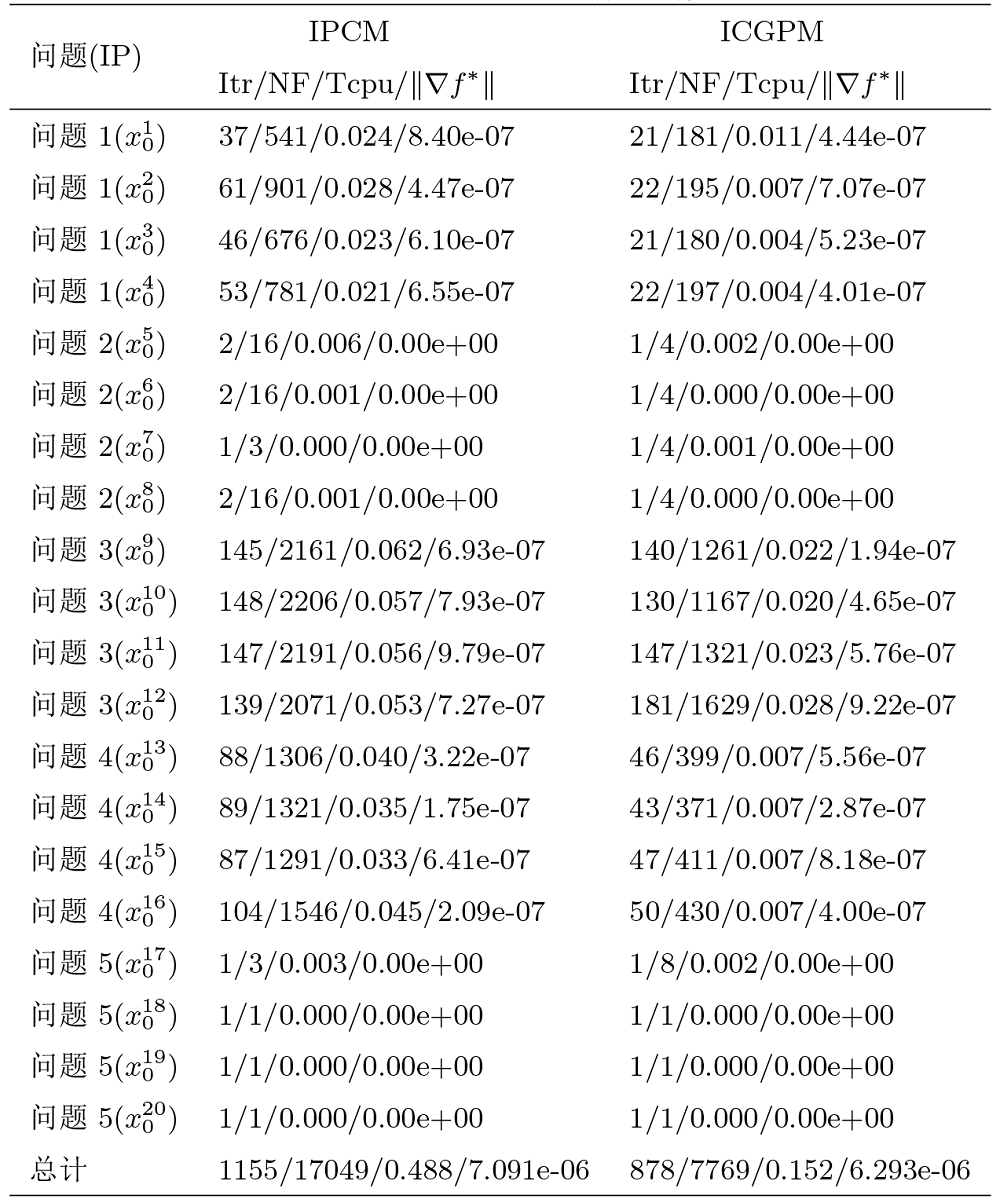

本节将通过 5 个例子检验算法 1 (ICGPM) 的数值效果. 所有程序代码利用 MATLAB R2017a 编写, 软件运行环境为 Windows 10 家庭中文版, Intel(R) Core(TM) i5-6200U, CPU 2.40GHz, 4GB RAM. 所有程序的终止准则为

本文将所提算法 ICGPM 和 IPCM[25]相比较. 测试问题如下

问题 1 求

问题 2 求

问题 3 求

问题 4 求

问题 5 求

经过反复调试参数, 本文选取两个算法较好的参数取值进行数值比较. 下面给出两个算法的具体参数设置

ICGPM:

IPCM:

在数值实验中, 每个测试问题所采用的初始点 (IP) 如下

问题 1:

问题 2:

问题 3:

问题 4:

问题 5:

(1) 本文所提算法 1 可有效求解 SFP;

(2) 基于问题 1 和问题 4 的数值结果可见, 本文所提算法 ICGPM 明显优于 IPCM;

(3) 从计算时间及解的质量看, 算法 1 迭代次数少、运行时间短, 且能获得更高质量的近似解.

5 结论和展望

本文基于非线性单调方程组的求解方法, 提出了求解分裂可行性问题的惯性共轭梯度投影法. 在较弱的条件下, 证明了算法的全局收敛性及

参考文献

A multiprojection algorithm using Bregman projections in a product space

Solving the split feasibility problem without prior knowledge of matrix norms

A note on the CQ algorithm for the split feasibility problem

A unified treatment of some iterative algorithms in signal processing and image reconstruction

Iterative oblique projection onto convex sets and the split feasibility problem

On variable-step relaxed projection algorithm for variational inequalities

Self-adaptive projection methods for the multiple-sets split feasibility problem

Cyclic algorithms for split feasibility problems in Hilbert spaces

A fixed point method for solving a split feasibility problem in Hilbert spaces

General splitting methods with linearization for the split feasibility problem

分裂可行性问题的外推加速线性交替方向乘子法及其全局收敛性

DOI:10.11896/jsjkx.230100009

[本文引用: 1]

This paper deals with a linear alternating direction multiplier method (ADMM) for Split feasibility problems (SFP).More specifically,the SFP has been formulated as a separable convex minimization problem with linear constraints,and then extrapolation accelerated linear ADMM has been proposed,which takes advantage of the separable structure,and then rising to sub-problems with closed-form solutions have been given.Furthermore,the global convergence of the proposed method is proved under some suitable conditions.Moreover,the algorithm has been tested by applying to two SFP examples in our theoretical results.

Extrapolation accelerated linear alternating direction multiplier method for split feasibility problems and its global convergence

DOI:10.11896/jsjkx.230100009

[本文引用: 1]

This paper deals with a linear alternating direction multiplier method (ADMM) for Split feasibility problems (SFP).More specifically,the SFP has been formulated as a separable convex minimization problem with linear constraints,and then extrapolation accelerated linear ADMM has been proposed,which takes advantage of the separable structure,and then rising to sub-problems with closed-form solutions have been given.Furthermore,the global convergence of the proposed method is proved under some suitable conditions.Moreover,the algorithm has been tested by applying to two SFP examples in our theoretical results.

Spectral gradient projection method for monotone nonlinear equations with convex constraints

凸约束非光滑方程组基于自适应线搜索的谱梯度投影算法

DOI:10.12286/jssx.2020.4.457

[本文引用: 1]

Based on three classic line search techniques for finding separating hyperplane, this paper proposes an adaptive line search method. Combining this with the spectral gradient projection method, a spectral gradient projection algorithm for nonsmooth monotone equations with convex constraints is proposed. The proposed method does not calculate and store any matrix, so it is suitable for solving large-scale nonsmooth monotone nonlinear equations. Under mild conditions, the global convergence of the proposed method is proved, and its rate of convergence is analyzed. Numerical experiments show that the proposed algorithm is efficient and robust.

A spectral gradient projection algorithm for convex constrained nonsmooth equations based on an adaptive line search

DOI:10.12286/jssx.2020.4.457

[本文引用: 1]

Based on three classic line search techniques for finding separating hyperplane, this paper proposes an adaptive line search method. Combining this with the spectral gradient projection method, a spectral gradient projection algorithm for nonsmooth monotone equations with convex constraints is proposed. The proposed method does not calculate and store any matrix, so it is suitable for solving large-scale nonsmooth monotone nonlinear equations. Under mild conditions, the global convergence of the proposed method is proved, and its rate of convergence is analyzed. Numerical experiments show that the proposed algorithm is efficient and robust.

New hybrid conjugate gradient projection method for the convex constrained equations

A class of conjugate gradient methods for convex constrained monotone equations

Three derivative-free projection methods for nonlinear equations with convex constraints

Multivariate spectral DY-type projection method for convex constrained nonlinear monotone equations

An adaptive family of projection methods for constrained monotone nonlinear equations with applications

A family of derivative-free conjugate gradient methods for constrained nonlinear equations and image restoration

A hybrid three-term conjugate gradient projection method for constrained nonlinear monotone equations with applications

Some methods of speeding up the convergence of iteration methods

Inertial relaxed CQ algorithms for solving a split feasibility problem in Hilbert spaces

Inertial projection and contraction methods for split feasibility problem applied to compressed sensing and image restoration

A family of inertial derivative-free projection methods for constrained nonlinear pseudo-monotone equations with applications

A family of hybrid conjugate gradient method with restart procedure for unconstrained optimizations and image restorations

Relative-error inertial-relaxed inexact versions of Douglas-Rachford and ADMM splitting algorithms

A modified inertial three-term conjugate gradient projection method for constrained nonlinear equations with applications in compressed sensing

The relaxed CQ algorithm solving the split feasibility problem

New inertial relaxed method for solving split feasibilities